Once you’ve uploaded your texts, you can see them under “Documents”: For our example, we’ve imported a couple of cleaned Wikipedia articles about various West African dishes. The files should be in plain .txt format and contain nothing but the text. Then, go to the “Import” dropdown menu and select “Documents” to upload your files. Once you’ve logged in, create a new project by clicking on the “Create Project” button. Want to see the tool in action? Let’s take a closer look! Using the Annotation Tool: A Walkthrough Example The tool helps you coordinate your team’s work by setting up standard questions and assigning members sets of documents. Once you have signed up as an admin, you can set up projects, upload documents, and invite team members. You can use Haystack’s annotation tool locally via Docker, or in the Chrome browser.

How can you tell whether your QA pipeline will properly cover your customers’ questions if you’re only evaluating it on an off-the-shelf dataset? To best measure your system’s performance for your use case, you’ll need a dataset that resembles your real-world data as closely as possible. The amount of data needed for fine-tuning is, thankfully, much smaller than the data required to train a model from scratch.Īpart from training, customized datasets are also useful for evaluating your systems. The good news is that you can employ a technique called “domain adaptation”: You take a model that’s been pre-trained on a large general QA dataset like SQuAD (the standard dataset for question answering) and fine-tune it with your own data. And if your question answering model is specific to a certain use case - say, financial statements or legal documents - you’ll be hard-pressed to find datasets that perfectly suit your needs. With so many curated datasets out there, why should you still annotate your own data? That’s because any machine learning model is only as good as the data it’s trained on. Done! Your dataset is then ready to be exported in the SQuAD format, which can be used directly to fine-tune or evaluate a question answering model. Simply upload your documents, add questions, and mark your answer spans. It’s free to use and allows you to seamlessly coordinate work between team members. We’ve designed the Haystack annotation tool to assist you in your annotation process. While it’s relatively easy to add labels to documents, annotating large answer spans is a more involved process. Of course, that increases the complexity of the annotation task. In the context of question answering, labels are swapped for answer spans. Clear and comprehensive annotation guidelines facilitate the annotator’s job. When annotating, you’ll struggle through cases where multiple labels might fit an example - or none at all. 
Real-world complexities often prevent neat categorization into discrete labels.

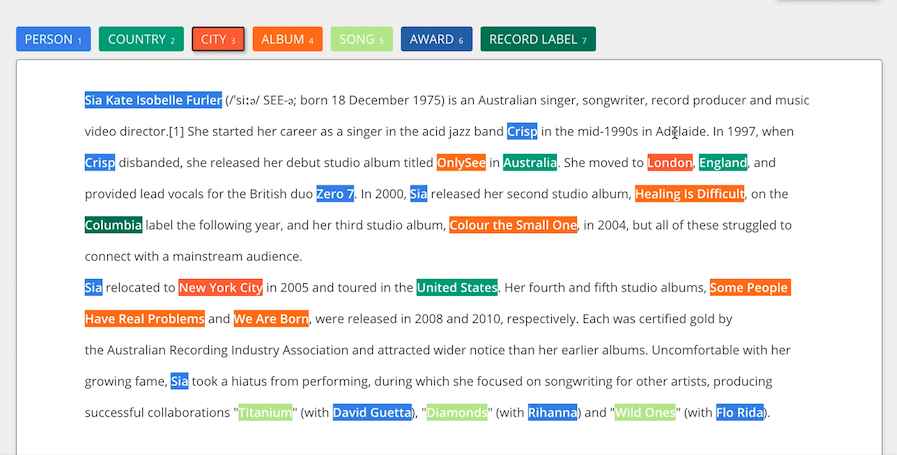

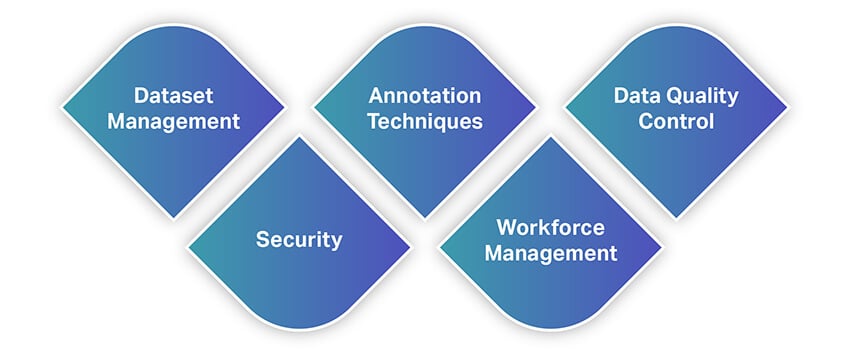

But anyone who has annotated would say otherwise. Annotating Natural LanguageĪnnotation sounds like an easy enough task: simply assign a label to a sentence, paragraph, or document. It involves identification of raw data, e.g., images, videos, text documents, and assigning labels to that data so that the machine learning model can properly interpret the context, learn from it and make accurate prediction during the inference process.įor example, labels might ‘tell’ whether an image contains a dog or train, which words an audio recording consists of, or if a text file contains answers to a specific question.ĭata labeling is needed for many use cases where machine learning is involved, such as computer vision, speech recognition, or natural language processing. What is Data Labeling?ĭata labeling (or ‘annotation) is one of the tasks necessary when developing and maintaining a machine learning model. Read on to learn more about Haystack annotation tool and how to use it. Haystack provides a free annotation tool to assist you in creating your own question answering (QA) datasets, making the process quicker and easier. But creating high-quality datasets from scratch is a tedious and expensive process. Supervised models depend on labeled data for both training and evaluation. This is especially true for Transformer-based neural networks, which are particularly apt for solving natural language tasks - be it question answering (QA), sentiment analysis, automated summarization, machine translation, or text classification. Without annotated datasets, there would be no supervised ML. If you’re interested in natural language processing (NLP), then you are probably aware of the importance of high-quality labeled datasets to any machine learning model.
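To make the SQuAD export format mentioned in this article more concrete, here is a minimal sketch of what a SQuAD-style file looks like and how you might sanity-check it before fine-tuning or evaluation. The field names follow the public SQuAD 2.0 layout; the sample sentence, the question, the id `q1`, and the helper `find_misaligned_answers` are made up for illustration and are not part of the annotation tool or its actual output.

```python
import json


def find_misaligned_answers(squad):
    """Return the ids of questions whose answer_start offset
    does not point at the answer text inside the context."""
    bad_ids = []
    for article in squad["data"]:
        for paragraph in article["paragraphs"]:
            context = paragraph["context"]
            for qa in paragraph["qas"]:
                for answer in qa["answers"]:
                    start = answer["answer_start"]
                    end = start + len(answer["text"])
                    if context[start:end] != answer["text"]:
                        bad_ids.append(qa["id"])
    return bad_ids


# Hypothetical sample document and answer span (not taken from a real export).
context = "Jollof rice is a rice dish from West Africa."
answer_text = "West Africa"

example = {
    "version": "v2.0",
    "data": [
        {
            "title": "Jollof rice",
            "paragraphs": [
                {
                    "context": context,
                    "qas": [
                        {
                            "id": "q1",
                            "question": "Where is jollof rice from?",
                            "answers": [
                                {
                                    "text": answer_text,
                                    # Character offset of the answer in the context.
                                    "answer_start": context.index(answer_text),
                                }
                            ],
                            "is_impossible": False,
                        }
                    ],
                }
            ],
        }
    ],
}

# Serialize the way an exported file would be stored, then check the offsets.
serialized = json.dumps(example, indent=2)
misaligned = find_misaligned_answers(json.loads(serialized))
print("misaligned question ids:", misaligned)
```

A check like this is worth running on any exported file, since an answer span whose offset no longer matches the text (for example, after the document was edited) can silently degrade both training and evaluation.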
